Competitor Intelligence: How to Build a Real-Time System Using Public Web Data

68% of B2B sales deals involve at least one direct competitor. The average sales team rates itself 3.8 out of 10 for competitive preparedness. That gap costs organizations an estimated $2–10 million per year in winnable deals — not because the intelligence doesn't exist, but because most teams assume they need a $40K/year platform to access it.

They don't.

Crayon, Klue, and every other enterprise CI platform collect from the same five public data layers anyone can access directly: pricing pages, job postings, SERP rankings, blog sitemaps, and review platforms. The intelligence isn't proprietary. The collection infrastructure is. And that infrastructure — a residential proxy pool that routes automated requests through real home IPs — costs $19/month to start, not $40,000 per year.

This article maps the five public data layers of a complete competitor intelligence system, explains why each layer requires residential proxy rotation to collect reliably at scale, and gives you the architecture to build it yourself.

What Competitor Intelligence Actually Is (And What Most Teams Get Wrong)

A competitor intelligence program is not a tool. It's a system. The tool is what most teams buy when they want the system — and the tool works until the budget gets cut, the contract expires, or someone asks why the platform costs more than the entire content marketing budget.

What is competitor intelligence?

Competitor intelligence is the systematic collection and analysis of public information about competitors — pricing, hiring, content, search rankings, and customer sentiment — to inform strategic business decisions. A complete CI system collects from five public data layers automatically, using residential proxy rotation to avoid rate-limiting blocks on repeated collection runs.

Here are the five data layers:

- Pricing pages — repositioning signals, tier restructuring, feature bundling changes

- Job postings — strategic hiring patterns that precede product launches by 60–90 days

- SERP rankings by location — keyword investment signals and content strategy shifts

- Blog sitemaps — content velocity, topic cluster expansion, update frequency

- Review platforms — customer sentiment, recurring complaints, feature gaps competitors can't close

Most teams default to SaaS platforms without evaluating the alternative. The platforms are real businesses with sales teams, and "we use Crayon" sounds more professional than "we built a Python script." But the data is identical. The collection layer is the only difference — and the collection layer is the part you can own.

The Five Public Data Layers of a Complete CI System

A competitor intelligence system built on public web data collects from five independent layers — pricing pages, job postings, SERP rankings, blog sitemaps, and review platforms. These are the primary competitive intelligence sources that enterprise platforms monitor automatically. Each layer is publicly accessible but individually rate-limited or ASN-blocked for automated collection at scale.

What data sources are used for competitive intelligence?

The five primary public sources are pricing pages, job posting boards, search engine results by location, competitor blog sitemaps, and review platforms like G2 and Trustpilot. Each source is freely accessible. Each requires residential proxy rotation to collect reliably at scale without triggering automated rate-limiting or IP blocks.

A complete competitor intelligence system collects from five public data layers: pricing pages that reveal positioning shifts, job postings that signal strategic hiring, SERP rankings that map keyword territory, blog sitemaps that track content velocity, and review sites that surface customer sentiment. Each layer is publicly accessible. Each requires residential proxy rotation to collect reliably at scale without triggering rate-limiting or IP blocks.

Layer 1: Pricing pages

Changes to a competitor's pricing page are the most direct signal of repositioning. A shift from per-seat to usage-based pricing isn't an administrative update; it's a strategic move responding to churn pressure or a push upmarket. A new enterprise tier that didn't exist last month signals a go-to-market shift before any press release confirms it.

Layer 2: Job postings

Job boards are the most underused CI source in most programs. When a competitor posts 4 solutions engineer roles with "healthcare compliance experience" in a 6-week window, that's not hiring — that's a vertical expansion signal. Product capabilities take months to build. Hiring precedes launch. The pattern is visible on public job boards 60–90 days before any announcement.

Layer 3: SERP rankings by location

Keyword investment signals are clearest in SERP movement. When a competitor drops from position 2 to 8 on a keyword you share, the first instinct is to celebrate. The smarter move is to check whether they've gained a Perplexity or ChatGPT citation on the same query. In 2026, a competitor can deliberately trade Google clicks for AI engine authority. Both surfaces need monitoring.

Layer 4: Blog sitemaps

For the content intelligence layer specifically — tracking what competitors are publishing, when, and how fast — collecting competitor blog sitemaps with residential proxies gives you a real-time content map that no SaaS tool matches for granularity. A competitor publishing 12 articles on a topic they haven't touched in 18 months is building topical authority ahead of a product launch, not refreshing evergreen content.
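To make the sitemap layer concrete, here is a minimal sketch of turning a collected sitemap into a list of URLs and last-modified dates using only Python's standard library. The `parse_sitemap` helper and the sample XML are assumptions for illustration; real sitemaps may be split across index files or omit `lastmod` entirely.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def parse_sitemap(xml_text):
    """Return a list of (url, lastmod) tuples; lastmod is None when absent."""
    root = ET.fromstring(xml_text)
    entries = []
    for url_el in root.findall("sm:url", NS):
        loc = url_el.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url_el.findtext("sm:lastmod", default=None, namespaces=NS)
        if loc:
            entries.append((loc, lastmod))
    return entries

# HYPOTHETICAL sample, shaped like a small blog sitemap
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://competitor.com/blog/post-a</loc><lastmod>2026-01-05</lastmod></url>
  <url><loc>https://competitor.com/blog/post-b</loc></url>
</urlset>"""

entries = parse_sitemap(SAMPLE)
```

Storing these tuples per collection run is what makes week-over-week comparison possible later.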

Layer 5: Review platforms

G2, Trustpilot, and Capterra are public sentiment databases updated daily by your competitors' customers. Three consecutive reviews mentioning the same missing integration is a product roadmap signal. Either the competitor is about to build it, or they can't. Either answer is a sales positioning opportunity.

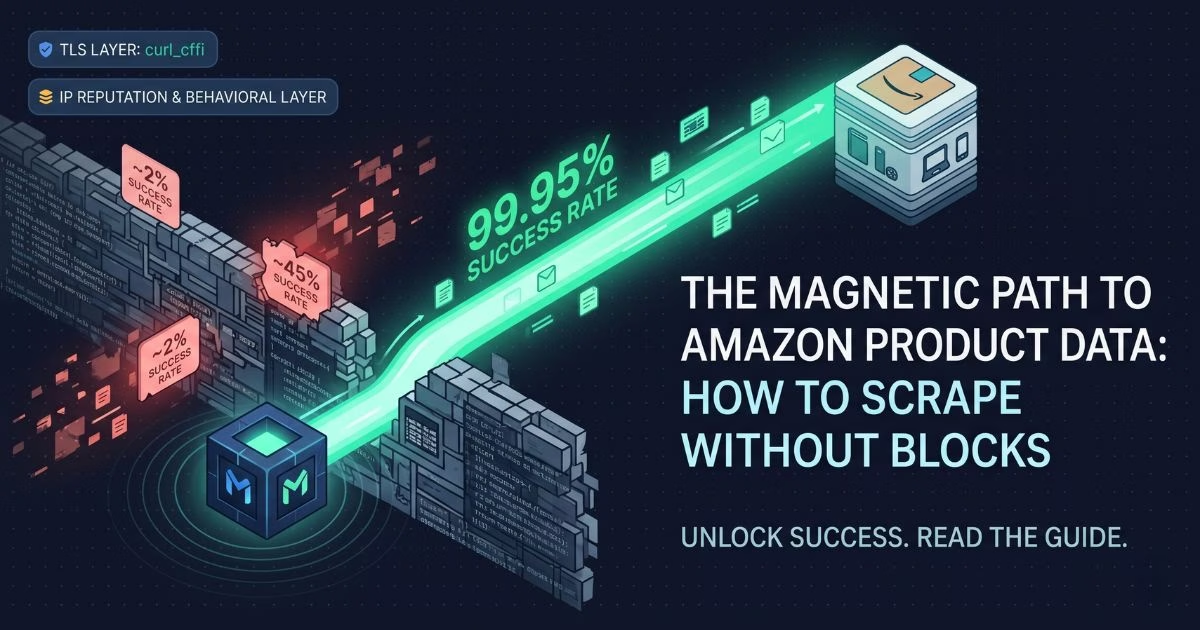

Magnetic Proxy's residential rotating network achieves a 99.95% average success rate and 0.6-second average response time when collecting from all five CI data layers simultaneously. (Source: Magnetic Proxy, 2026)

Why Each Layer Breaks Without Residential Proxy Rotation

The five CI data layers are publicly accessible. The collection problem isn't legal — it's technical. Anti-bot systems don't evaluate the intent behind a request. They classify traffic by ASN: the Autonomous System Number that identifies which network an IP address belongs to.

A datacenter IP registered to AWS, Google Cloud, or DigitalOcean carries an ASN that platforms pre-flag before a single request completes. It doesn't matter what the request asks for. The origin is enough.

Residential IPs registered to ISPs — Comcast, AT&T, Spectrum — carry the behavioral history of genuine home users. That history is what passes platform-level bot scoring across all five layers.
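The origin-based classification described above can be sketched in a few lines. This is a toy model, not how any specific anti-bot vendor works: the lookup table is hypothetical, the networks are reserved documentation ranges (RFC 5737), and the labels are illustrative rather than real ASN assignments.

```python
import ipaddress

# HYPOTHETICAL lookup table: documentation-only networks with made-up labels
NETWORK_TYPES = {
    ipaddress.ip_network("192.0.2.0/24"): "datacenter",
    ipaddress.ip_network("198.51.100.0/24"): "residential",
}

def classify_origin(ip):
    """Label an IP by the network it belongs to, ignoring request content."""
    addr = ipaddress.ip_address(ip)
    for network, kind in NETWORK_TYPES.items():
        if addr in network:
            return kind
    return "unknown"

def allow_request(ip):
    """Toy policy: reject datacenter origins before looking at the request."""
    return classify_origin(ip) != "datacenter"
```

The point of the sketch is that `allow_request` never inspects the URL or headers: the decision is made from the origin network alone.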

Competitive intelligence collection breaks at scale because the data sources that matter most — pricing pages, job boards, review platforms, and SERPs — actively block repeated automated requests from the same IP. Residential proxies solve this by routing each collection request through a real home internet connection, making automated monitoring indistinguishable from organic browsing. Magnetic Proxy's network achieves a 99.95% average success rate across all five CI data layers.

Here's where each layer fails specifically without residential proxy rotation:

Pricing pages on most SaaS platforms use Cloudflare protection that blocks datacenter ASN ranges by default. Three requests from the same IP in 60 seconds triggers a rate limit. Residential IPs pass because they match organic browsing patterns.

Job boards — specifically Glassdoor and Indeed — block datacenter ranges for automated collection entirely. SERP tracking requires geo-targeted residential IPs: the results a user in Chicago sees are different from what a server in a New Jersey datacenter returns. Both layers fail without city-level residential geo-targeting.

Review platforms like G2 use Cloudflare protection that serves residential traffic and blocks datacenter ranges before content loads. Same mechanism, same fix.
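The per-IP rate limit mentioned for pricing pages (three requests from one IP in 60 seconds) can be modeled with a small sliding-window counter. This is a sketch under assumptions: the `PerIPRateLimiter` class is hypothetical, and real platforms combine many more signals than a request count. It does show why rotating IPs changes the outcome.

```python
from collections import defaultdict, deque

class PerIPRateLimiter:
    """Toy sliding-window limiter keyed by client IP."""

    def __init__(self, threshold=3, window_seconds=60):
        self.threshold = threshold
        self.window = window_seconds
        self.hits = defaultdict(deque)  # ip -> timestamps of recent requests

    def allow(self, ip, now):
        recent = self.hits[ip]
        # Drop timestamps that have aged out of the window
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        recent.append(now)
        # The request that brings the count up to the threshold is rejected
        return len(recent) < self.threshold

limiter = PerIPRateLimiter()

# Three requests from one IP inside the window: the third is rejected
same_ip = [limiter.allow("198.51.100.7", t) for t in (0, 10, 20)]

# The same three requests spread across three IPs: all pass
rotated = [
    limiter.allow(ip, t)
    for ip, t in (("203.0.113.1", 0), ("203.0.113.2", 10), ("203.0.113.3", 20))
]
```

Because the counter is keyed by IP, each rotated request starts from a count of one, which is the behavior a rotating pool relies on.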

Here is a working collection script routing requests across all five CI data layers through Magnetic Proxy's residential pool:

```python
# Competitor intelligence collector: routes requests through rotating
# residential IPs to avoid rate-limiting across all five CI data layers
import requests

# Residential proxy configuration: each request gets a different home IP
proxy_config = {
    "http": "https://customer-youruser-cc-us:yourpassword@rs.magneticproxy.net:443",
    "https": "https://customer-youruser-cc-us:yourpassword@rs.magneticproxy.net:443",
}

# Define the five CI data layer targets for one competitor
ci_targets = {
    "pricing_page": "https://competitor.com/pricing",
    "job_postings": "https://www.indeed.com/cmp/competitor/jobs",
    "sitemap": "https://competitor.com/blog-sitemap.xml",
    "g2_reviews": "https://www.g2.com/products/competitor/reviews",
    "serp_check": "https://www.google.com/search?q=competitor+alternative",
}

for layer, url in ci_targets.items():
    try:
        response = requests.get(url, proxies=proxy_config, timeout=10)
        print(f"{layer}: {response.status_code}, {len(response.content)} bytes")
    except requests.RequestException as exc:
        # One failed target should not abort the rest of the run
        print(f"{layer}: request failed ({exc})")

# Output: HTTP 200 responses across all five targets,
# each routed through a different residential IP automatically
```

All five requests route through different residential IPs in the same collection run. The target servers see five different home users. The collection completes without a single block.

If you're running collection across more than 3 competitor domains simultaneously, a residential proxy pool is the piece that keeps it from breaking. Magnetic Proxy's 30 GB plan at $57/month covers a full CI stack across 10 competitors without running out of bandwidth.
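Whether a given plan covers your stack depends on how much you collect, so it is worth running the arithmetic with your own numbers. The sketch below is a back-of-the-envelope estimate; the `monthly_bandwidth_gb` helper and every input value (pages per competitor, average page size, run frequency) are assumptions, not measurements.

```python
def monthly_bandwidth_gb(competitors, pages_per_competitor, avg_page_mb, runs_per_month):
    """Estimate proxy traffic in GB (1 GB = 1024 MB) for a collection schedule."""
    total_mb = competitors * pages_per_competitor * avg_page_mb * runs_per_month
    return total_mb / 1024

# HYPOTHETICAL inputs: 10 competitors, 50 pages each, 2 MB per page, daily runs
estimate = monthly_bandwidth_gb(10, 50, 2.0, 30)
fits_30gb_plan = estimate <= 30

print(f"Estimated usage: {estimate:.1f} GB/month, fits 30 GB plan: {fits_30gb_plan}")
```

With these assumed inputs the estimate lands just under 30 GB. A weekly schedule, as used in the architecture later in this article, would need far less.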

⚠️ Important: The five CI data layers are all publicly accessible. Collecting public web data is lawful in most US jurisdictions, supported by rulings including hiQ Labs v. LinkedIn (9th Circuit, 2022). The ethical standard, as defined by SCIP (Strategic and Competitive Intelligence Professionals), requires using only public information and never accessing password-protected systems (Source: SCIP, Strategic and Competitive Intelligence Professionals Code of Ethics, 2024). Everything in this system meets both bars.

Reading the Signals — What Each Layer Actually Tells You

Collecting data is step one. Interpretation is where most teams stall. Here's what each layer tells you when you know what to look for.

Pricing page signals

A competitor restructures their pricing from per-seat to usage-based over three months. Read as a single change, it's an administrative update. Read as a pattern — especially alongside new enterprise tier additions and removal of the cheapest plan — it's a market repositioning signal. They're moving upstream, trying to improve net revenue retention, or both. Your sales team needs to know before it shows up in a competitor's pitch deck.
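One way to read pricing changes as a pattern rather than a one-off is to reduce each snapshot to a small fingerprint and compare fingerprints over time. The sketch below is illustrative only: the keyword lists, the `pricing_fingerprint` and `pricing_changes` helpers, and the sample HTML are all assumptions, and a real pricing page will usually need a proper HTML parser.

```python
import re

PRICE_RE = re.compile(r"\$\s?(\d[\d,]*(?:\.\d{2})?)")

# HYPOTHETICAL keyword lists; tune them to the wording competitors actually use
MODEL_KEYWORDS = {
    "per_seat": ("per seat", "per user", "/user"),
    "usage_based": ("per request", "per gb", "usage-based", "pay as you go"),
}

def pricing_fingerprint(html):
    """Summarize a pricing page as its set of prices and pricing-model hints."""
    text = re.sub(r"<[^>]+>", " ", html).lower()
    prices = {float(p.replace(",", "")) for p in PRICE_RE.findall(text)}
    models = {
        name for name, words in MODEL_KEYWORDS.items()
        if any(w in text for w in words)
    }
    return {"prices": prices, "models": models}

def pricing_changes(old_html, new_html):
    """Compare two snapshots and report what appeared or disappeared."""
    old, new = pricing_fingerprint(old_html), pricing_fingerprint(new_html)
    return {
        "prices_added": sorted(new["prices"] - old["prices"]),
        "prices_removed": sorted(old["prices"] - new["prices"]),
        "models_added": sorted(new["models"] - old["models"]),
        "models_removed": sorted(old["models"] - new["models"]),
    }

# HYPOTHETICAL snapshots: cheapest plan removed, usage-based tier added
last_month = "<div>Starter $19 per user</div><div>Pro $49 per user</div>"
this_month = "<div>Pro $49 per user</div><div>Enterprise $0.02 per request</div>"
changes = pricing_changes(last_month, this_month)
```

A removed lowest price together with a newly detected usage-based model is the kind of combined change the paragraph above treats as a repositioning signal.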

Job posting signals

A team running weekly job board collection noticed 8 consecutive postings from a SaaS competitor: enterprise account executives, all with "financial services vertical experience" as a required qualification. The pattern emerged over 6 weeks. The team adjusted their own sales targeting to accelerate enterprise financial services outreach before the competitor's vertical launch. They closed 3 financial services deals in the response window. The announcement came 11 weeks after the first job posting. The intelligence came entirely from a public job board, collected via a residential proxy rotation on a weekly schedule.
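Spotting a pattern like this one comes down to counting postings that share a qualification inside a time window. Here is a minimal sketch, assuming postings have already been parsed into dictionaries; the field names, the sample data, and the flagging threshold are hypothetical.

```python
from datetime import date, timedelta

def postings_in_window(postings, phrase, window_days, as_of):
    """Return postings whose text mentions `phrase` within the trailing window."""
    start = as_of - timedelta(days=window_days)
    phrase = phrase.lower()
    return [
        p for p in postings
        if start <= p["posted"] <= as_of and phrase in p["text"].lower()
    ]

# HYPOTHETICAL postings, shaped as they might look after parsing a job board
postings = [
    {"title": "Enterprise AE", "posted": date(2026, 1, 5),
     "text": "Requires financial services vertical experience."},
    {"title": "Enterprise AE", "posted": date(2026, 1, 26),
     "text": "Financial services vertical experience required."},
    {"title": "Backend Engineer", "posted": date(2026, 1, 20),
     "text": "Go and PostgreSQL."},
    {"title": "Enterprise AE", "posted": date(2025, 10, 1),
     "text": "Financial services vertical experience required."},
]

# Six-week window, matching the timeframe in the example above
matches = postings_in_window(postings, "financial services", 42, date(2026, 2, 1))
is_signal = len(matches) >= 2  # HYPOTHETICAL threshold for flagging a pattern
```

The older October posting falls outside the window and is ignored, which keeps a long-running evergreen role from looking like a new hiring push.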

SERP signals

In 2026, buyers are asking ChatGPT, Gemini, and Perplexity "what's the best [your category] tool?" before they ever visit your website. Tracking how AI describes your brand vs. competitors is the new battleground. A competitor dropping on Google while gaining AI engine citations is not losing — they're pivoting. SERP monitoring that only tracks Google rankings in 2026 misses half the picture.
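Monitoring both surfaces means recording two observations per query and reading them together. The sketch below shows one possible way to label the combination. The `classify_serp_shift` function and its labels are assumptions, and how you determine whether an AI engine cited a competitor is left outside the sketch.

```python
def classify_serp_shift(google_before, google_after, ai_cited_before, ai_cited_after):
    """Label a competitor's movement on one query across both surfaces.

    Google positions are 1-based (lower is better). The AI flags record whether
    an AI engine cited the competitor for the same query in each period.
    """
    dropped = google_after > google_before
    gained_ai = ai_cited_after and not ai_cited_before
    if dropped and gained_ai:
        return "possible pivot"
    if dropped and not ai_cited_after:
        return "losing ground"
    if google_after < google_before or gained_ai:
        return "gaining ground"
    return "mixed or unchanged"

# A drop from position 2 to 8 reads differently depending on the AI surface
with_ai_gain = classify_serp_shift(2, 8, False, True)
without_ai_gain = classify_serp_shift(2, 8, False, False)
```

The same Google drop produces two different labels, which is the reason a Google-only tracker can misread the move.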

Sitemap signals

A competitor publishes 12 articles on AI automation in a 6-week window after 18 months of silence on that topic. That's not a content refresh. It's a content cluster expansion ahead of a product launch. The articles build topical authority. The product follows. Sitemap polling caught the signal 6 weeks before the product page appeared.

Review platform signals

Three consecutive G2 reviews from enterprise customers mention the same missing Salesforce integration. That's a product roadmap signal: either the competitor is about to build it, or they've decided not to. If they're about to build it, accelerate your own integration. If they can't, your sales team has a talking point. The data is public, updated daily, and sitting on G2 waiting to be read.
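Detecting "three consecutive reviews mentioning the same gap" is a simple streak check once review text has been extracted. A minimal sketch follows; the `recurring_mention` helper and the sample reviews are hypothetical, and plain substring matching will miss paraphrases.

```python
def recurring_mention(reviews, term, run_length=3):
    """True when `term` appears in `run_length` consecutive reviews."""
    term = term.lower()
    streak = 0
    for text in reviews:
        streak = streak + 1 if term in text.lower() else 0
        if streak >= run_length:
            return True
    return False

# HYPOTHETICAL review texts in publication order
reviews = [
    "Great UI, but no Salesforce integration.",
    "We still export CSVs because the Salesforce integration is missing.",
    "Support is fast. A Salesforce integration would help.",
    "Pricing is fair.",
]

flagged = recurring_mention(reviews, "salesforce integration")
```

Passing a different `run_length` lets you loosen or tighten what counts as a recurring complaint.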

Enterprise CI platforms like Crayon and Klue cost $20,000–$40,000 per year. They collect from the same five public data layers a self-built system uses. The difference is not data access — it's the infrastructure layer underneath. A residential proxy pool starting at $19/month provides the same collection capability that powers enterprise CI platforms, without the platform cost sitting on top of it.

Building the System — A Three-Component Architecture

The system has three components. Each runs on a schedule. Each produces a specific output. Together they give you real-time CI without a platform subscription.

Component 1: Collection layer (weekly)

A web scraping script polls each competitor's pricing page, blog sitemap, job board profile, and G2 listing on a weekly schedule. All requests route through Magnetic Proxy's residential endpoint to avoid blocks. Output: raw HTML and XML files timestamped and stored in a structured folder by competitor and date.

```python
# Weekly CI collection run: pulls the configured data layers for each competitor
import os
from datetime import datetime

import requests

proxy_config = {
    "https": "https://customer-youruser-cc-us:yourpassword@rs.magneticproxy.net:443"
}

competitors = {
    "competitor_a": {
        "pricing": "https://competitor-a.com/pricing",
        "sitemap": "https://competitor-a.com/blog-sitemap.xml",
        "reviews": "https://www.g2.com/products/competitor-a/reviews",
    }
}

timestamp = datetime.now().strftime("%Y-%m-%d")

for name, targets in competitors.items():
    out_dir = f"ci_data/{name}/{timestamp}"
    os.makedirs(out_dir, exist_ok=True)
    for layer, url in targets.items():
        response = requests.get(url, proxies=proxy_config, timeout=10)
        # Sitemaps are XML; every other layer is saved as HTML
        ext = "xml" if layer == "sitemap" else "html"
        with open(f"{out_dir}/{layer}.{ext}", "wb") as f:
            f.write(response.content)

# Output: timestamped HTML and XML files per competitor per layer,
# stored locally for weekly diff comparison
```

Component 2: Signal layer (weekly)

A comparison script diffs the current week's collected files against the previous week. It flags: new URLs in the sitemap, HTML changes on the pricing page above a character threshold, and new review text. Output: a plain-text change log with timestamps and competitor labels. Takes 2 minutes to run after collection completes.
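A comparison step along these lines could be sketched as follows, using only the standard library. Everything here is an assumption for illustration: the function names, the snapshot dictionary shape, the log line format, and the default threshold of 20 characters. `difflib.SequenceMatcher` is also slow on very large pages, so a production version might diff extracted text rather than raw HTML.

```python
import difflib
import re
from datetime import date

LOC_RE = re.compile(r"<loc>\s*(.*?)\s*</loc>", re.S)

def new_sitemap_urls(old_xml, new_xml):
    """URLs present in this week's sitemap but not in last week's."""
    return sorted(set(LOC_RE.findall(new_xml)) - set(LOC_RE.findall(old_xml)))

def changed_chars(old_text, new_text):
    """Count characters inserted, deleted, or replaced between two snapshots."""
    matcher = difflib.SequenceMatcher(None, old_text, new_text, autojunk=False)
    return sum(
        max(i2 - i1, j2 - j1)
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    )

def build_change_log(competitor, old, new, pricing_threshold=20, today=None):
    """Return plain-text log lines for one competitor's weekly diff."""
    stamp = (today or date.today()).isoformat()
    lines = []
    for url in new_sitemap_urls(old["sitemap"], new["sitemap"]):
        lines.append(f"{stamp} {competitor} sitemap: new URL {url}")
    delta = changed_chars(old["pricing"], new["pricing"])
    if delta > pricing_threshold:  # HYPOTHETICAL threshold, tune per page
        lines.append(f"{stamp} {competitor} pricing: {delta} characters changed")
    seen = set(old["reviews"])
    for text in new["reviews"]:
        if text not in seen:
            lines.append(f"{stamp} {competitor} reviews: new review: {text}")
    return lines

# HYPOTHETICAL snapshots for two consecutive weeks
old = {
    "sitemap": "<urlset><url><loc>https://competitor.com/blog/a</loc></url></urlset>",
    "pricing": "<p>Pro $49 per user</p>",
    "reviews": ["Solid tool."],
}
new = {
    "sitemap": "<urlset><url><loc>https://competitor.com/blog/a</loc></url>"
               "<url><loc>https://competitor.com/blog/b</loc></url></urlset>",
    "pricing": "<p>Pro $49 per user</p><p>Enterprise: contact sales for pricing</p>",
    "reviews": ["Solid tool.", "Missing Salesforce integration."],
}

log = build_change_log("competitor_a", old, new, today=date(2026, 1, 12))
```

Each returned line carries a date and a competitor label, so logs from successive weeks can be appended to one file for the monthly review.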

Component 3: Intelligence layer (monthly)

A human reviews the cumulative signal log once a month. Job posting patterns are counted by role type, location, and seniority to identify vertical expansion signals. SERP movement is reviewed against the two-surface baseline (Google and AI engine citations). Output: a one-page competitive brief covering each monitored competitor. Distribution: leadership team, sales, and product.
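The monthly job posting count is easy to prepare ahead of the human review. Below is a minimal sketch using `collections.Counter`; the `summarize_postings` helper, the field names, and the sample records are hypothetical and assume postings were normalized during collection.

```python
from collections import Counter

def summarize_postings(postings):
    """Count postings by role type, location, and seniority."""
    return {
        field: Counter(p[field] for p in postings)
        for field in ("role_type", "location", "seniority")
    }

# HYPOTHETICAL normalized postings for one competitor over one month
postings = [
    {"role_type": "solutions engineer", "location": "Chicago", "seniority": "senior"},
    {"role_type": "solutions engineer", "location": "Remote", "seniority": "senior"},
    {"role_type": "account executive", "location": "Chicago", "seniority": "mid"},
]

summary = summarize_postings(postings)
top_role = summary["role_type"].most_common(1)
```

Comparing these counts month over month is what surfaces a sudden concentration in one role type or location.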

Magnetic Proxy's rotating residential proxies provide the collection layer — starting at $19/month. Connect your first script to the endpoint in under 10 minutes.

The Gap Between Knowing and Acting Is an Infrastructure Problem

The data that enterprise competitor intelligence platforms sell access to is publicly available. The gap is not data access — it's collection infrastructure. Residential proxy rotation is what makes automated CI monitoring reliable at scale: it routes each request through a real home IP, distributes collection across a rotating pool, and makes systematic competitor monitoring indistinguishable from organic browsing to the platforms that protect the data.

A self-built competitor intelligence program built on five public data layers and a residential proxy pool delivers the same intelligence that $40K/year platforms deliver. The difference is who owns the collection layer. Owning it means the system runs when budgets tighten, scales when the competitive landscape grows, and costs a fraction of the alternative.

See Magnetic Proxy's residential proxy plans and start your first collection run.

